AI Frontiers Conference

Showcasing the Frontier Technologies of AI

Deployed at Large Scale

San Jose Convention Center

November 9-11, 2018

Our Speakers

Ilya Sutskever

Co-Founder & Director, OpenAI

Day 1, 9:00 - 9:50am

Recent Advances in Deep Learning and AI from OpenAI

I will present several advances in deep learning from OpenAI. First, I will present OpenAI Five, a neural network that learned to play on par with some of the strongest professional Dota 2 teams in the world in an 18-hero version of the game. Next, I will present Dactyl, a human-like robot hand trained entirely in simulation with reinforcement learning, which has achieved unprecedented dexterity on a physical robot. I will also present our results on unsupervised learning in language, which show that pre-training followed by fine-tuning can significantly improve on the state of the art. Finally, I will present an overview of the historical progress in the field.

Speaker Bio

Ilya Sutskever received his Ph.D. in computer science from the University of Toronto under the supervision of Geoffrey Hinton. He was briefly a postdoctoral fellow with Andrew Ng at Stanford University, after which he left to co-found DNNResearch, which Google acquired the following year. Sutskever joined the Google Brain team as a research scientist, where he developed the Sequence to Sequence model, contributed to the design of TensorFlow, and helped establish the Brain Residency Program. He is a co-founder of OpenAI, where he currently serves as research director.

Sutskever has made many contributions to the field of deep learning, including the convolutional neural network that won the 2012 ImageNet competition and convinced the computer vision community of the power of deep learning. He was named to MIT Technology Review’s 35 Innovators Under 35 list.

Jay Yagnik

VP, Google AI

Day 1, 7:00 - 9:00pm

A History Lesson on AI

We have reached a remarkable point in history with the evolution of AI, from applying this technology to incredible use cases in healthcare to addressing the world's biggest humanitarian and environmental issues. Our ability to learn task-specific functions for vision, language, sequence, and control tasks is improving at a rapid pace. This talk will survey some of the current advances in AI, compare AI to other fields that have developed historically over time, and calibrate where we are in the relative advancement timeline. We will also speculate about the next inflection points and capabilities that AI can offer down the road, and look at how those might intersect with other emergent fields, e.g., quantum computing.

Speaker Bio

Jay Yagnik is currently a Vice President and Engineering Fellow at Google, leading large parts of Google AI. At Google he has led many foundational research efforts in machine learning and perception, computer vision, video understanding, privacy-preserving machine learning, quantum AI, applied sciences, and more. He has also created multiple engineering and product successes for the company, in areas including Google Photos, YouTube, Search, Ads, Android, Maps, and Hardware. Jay’s research interests span deep learning, reinforcement learning, scalable matching, graph information propagation, image representation and recognition, temporal information mining, and sparse networks. He received his graduate education at the Indian Institute of Science and his undergraduate education at the Institute of Technology, Nirma University.

Pieter Abbeel

Professor, UC Berkeley

Day 2, 9:40 - 10:40am

Deep Learning for Robotics

Programming robots remains notoriously difficult. Equipping robots with the ability to learn would bypass the need for what otherwise often ends up being time-consuming, task-specific programming. This talk will describe recent progress in deep reinforcement learning (robots learning through their own trial and error), in apprenticeship learning (robots learning from observing people), and in meta-learning for action (robots learning to learn). This work has led to new robotic capabilities in manipulation, locomotion, and flight.

Speaker Bio

Pieter Abbeel is Professor and Director of the Robot Learning Lab at UC Berkeley [2008- ], Co-Founder of covariant.ai [2017- ], Co-Founder of Gradescope [2014- ], Advisor to OpenAI, Founding Faculty Partner at AI@TheHouse, and Advisor to many AI/robotics start-ups. He works in machine learning and robotics. In particular, his research focuses on how to make robots learn from people (apprenticeship learning), learn through their own trial and error (reinforcement learning), and speed up skill acquisition through learning-to-learn (meta-learning). His robots have learned advanced helicopter aerobatics, knot-tying, basic assembly, laundry organizing, locomotion, and vision-based robotic manipulation. He has won numerous awards, including best paper awards at ICML, NIPS, and ICRA; early career awards from NSF, DARPA, ONR, AFOSR, Sloan, TR35, and IEEE; and the Presidential Early Career Award for Scientists and Engineers (PECASE). Pieter's work is frequently featured in the popular press, including the New York Times, BBC, Bloomberg, Wall Street Journal, Wired, Forbes, Tech Review, and NPR.

Matt Feiszli

Research Manager, Facebook

Day 1, 10:00 - 11:30am

Video Understanding: Modalities, Time, and Scale

I will discuss the state of the art in video understanding, particularly its research and applications at Facebook. I will focus on two active areas: multimodality and time. Video is naturally multimodal, offering great possibilities for content understanding while also opening new doors such as unsupervised and weakly supervised learning at scale. Temporal representation remains a largely open problem: while we can describe a few seconds of video, there is no natural representation for a few minutes of video. I will discuss recent progress, the importance of these problems for applications, and what we hope to achieve.

Speaker Bio

Matt Feiszli, Research Scientist Manager at Facebook, leads computer vision efforts on video understanding at Facebook. He previously led video understanding work as Senior Machine Learning Scientist at Sentient Technologies and also spent four years at Yale University as Gibbs Assistant Professor in mathematics. He received his Ph.D. in Applied Mathematics from Brown University under David Mumford and holds undergraduate degrees in Computer Science and Psychology from Yale University.

Percy Liang

Assistant Professor, Stanford University

Day 1, 1:00 - 1:30pm

Pushing the Limits of Machine Learning

In recent years, machine learning has undoubtedly been hugely successful in driving progress in AI applications. However, as we will explore in this talk, even state-of-the-art systems have "blind spots" that cause them to generalize poorly out of domain and leave them vulnerable to adversarial examples. We then suggest that more unsupervised learning settings can encourage the development of more robust systems. We show positive results on two tasks: (i) text style and attribute transfer, the task of converting a sentence with one attribute (e.g., sentiment) into one with another; and (ii) solving SAT instances (classical problems requiring logical reasoning) using end-to-end neural networks.

Speaker Bio

Percy Liang is an Assistant Professor of Computer Science at Stanford University (B.S. from MIT, 2004; Ph.D. from UC Berkeley, 2011). His research spans machine learning and natural language processing, with the goal of developing trustworthy agents that can communicate effectively with people and improve over time through interaction. Specific topics include question answering, dialogue, program induction, interactive learning, and reliable machine learning. His awards include the IJCAI Computers and Thought Award (2016), an NSF CAREER Award (2016), a Sloan Research Fellowship (2015), and a Microsoft Research Faculty Fellowship (2014).

Quoc Le

Research Scientist, Google Brain

Day 1, 1:30 - 2:30pm

Using Machine Learning to Automate Machine Learning

Traditional machine learning systems are hand-designed and tuned by machine learning experts. To scale up the impact of machine learning to many real-world applications, we must find a way to automate the design of these pipelines. In this talk, I will discuss the use of machine learning to automate the design of neural architectures and data augmentation strategies (Neural Architecture Search and AutoAugment).

Speaker Bio

Quoc is a researcher at Google Brain. He was an early member of the Google Brain team and is known for his work on large-scale deep learning, sequence to sequence learning (seq2seq), Google's Neural Machine Translation System (GNMT), and automated machine learning (AutoML). Prior to Google Brain, Quoc did his PhD at Stanford. He was recognized as one of the top innovators in 2014 by MIT Technology Review.

Sumit Gulwani

Partner Research Manager, Microsoft

Day 1, 1:30 - 2:30pm

Programming by Examples

Programming by examples (PBE) is a new frontier in AI that enables users to create scripts from input-output examples. A killer application is data wrangling, where PBE automates tasks like string/number/date transformations (e.g., converting “FirstName LastName” to “LastName, FirstName”), column splitting, table extraction from log files, webpages, and PDFs, normalizing semi-structured spreadsheets into structured tables, transforming JSON from one format to another, etc. This presentation will educate the audience about this new PBE-based programming paradigm: its applications, its form factors inside different products, and the science behind it.
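To make the idea concrete, here is a toy sketch of programming by examples for the name-swapping task mentioned above. It assumes a deliberately tiny, hypothetical language in which a program is a concatenation of input words (selected by position) and constant strings. The synthesizer enumerates every program that reproduces the first example, keeps those consistent with the remaining examples, and prefers the one that hard-codes the fewest characters. The functions `run`, `explain`, and `synthesize` are illustrative names only; this is not the PROSE SDK API or the Flash Fill algorithm, which use much richer languages and ranking.

```python
def run(program, text):
    """Execute a toy program: concatenate input words (by index) and constant strings."""
    words = text.split()
    pieces = []
    for kind, value in program:
        if kind == "word":
            if value >= len(words):
                return None  # program does not apply to this input
            pieces.append(words[value])
        else:  # kind == "const"
            pieces.append(value)
    return "".join(pieces)


def explain(inp, out):
    """List every toy program mapping `inp` to `out` (never two constants in a row)."""
    words = inp.split()
    found = []

    def extend(pos, prog):
        if pos == len(out):
            found.append(tuple(prog))
            return
        # Option 1: copy an input word that matches the output at this position.
        for i, w in enumerate(words):
            if out.startswith(w, pos):
                extend(pos + len(w), prog + [("word", i)])
        # Option 2: emit a constant string (merged, so no two constants are adjacent).
        if not prog or prog[-1][0] != "const":
            for end in range(pos + 1, len(out) + 1):
                extend(end, prog + [("const", out[pos:end])])

    extend(0, [])
    return found


def synthesize(examples):
    """Return the simplest toy program consistent with every (input, output) example."""
    first_in, first_out = examples[0]
    consistent = [p for p in explain(first_in, first_out)
                  if all(run(p, i) == o for i, o in examples[1:])]
    if not consistent:
        return None
    # Ranking: prefer programs that copy from the input over hard-coded constants.
    return min(consistent,
               key=lambda p: (sum(len(v) for k, v in p if k == "const"), len(p)))


program = synthesize([("John Smith", "Smith, John")])
print(program)
print(run(program, "Ada Lovelace"))
```

With the single example ("John Smith", "Smith, John"), several programs fit, including one that simply returns the constant "Smith, John". The ranking step is what selects the generalizing program (second word, then ", ", then first word), which maps "Ada Lovelace" to "Lovelace, Ada".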

Speaker Bio

Sumit Gulwani is a research manager at Microsoft, where he leads the PROSE research and engineering team that develops APIs for program synthesis (programming by examples and natural language) and incorporates them into real products. He is the inventor of the popular Flash Fill feature in Microsoft Excel, used by hundreds of millions of people. He has published 120+ peer-reviewed papers in top-tier conferences and journals across multiple computer science areas, delivered 40+ keynotes and invited talks at various forums, and authored 50+ patent applications (granted and pending). Sumit is a recipient of the prestigious ACM SIGPLAN Robin Milner Young Researcher Award, the ACM SIGPLAN Outstanding Doctoral Dissertation Award, and the President’s Gold Medal from IIT Kanpur.

Divya Jain

Director, Adobe

Day 1, 10:00 - 11:30am

Video Summarization

As video content becomes mainstream, video summarization is becoming a hot research topic in academia and industry. Video thumbnail generation and summarization have been studied for years, but deep learning and reinforcement learning are changing the landscape and emerging as the winning approaches for optimal frame selection. Recent advances in GANs are improving the quality, aesthetics, and relevance of the frames chosen to represent the original videos. Come join this session to learn about the various challenges and emerging solutions in video summarization.

Speaker Bio

Divya Jain is an industry-recognized product and technology leader in machine learning and AI. She has 15+ years of industry experience at various startups and Fortune 500 companies. She is currently serving as an Engineering Director for the Sensei ML platform at Adobe. Before this, she was a Research Director at Tyco Innovation Garage, where she led various deep learning initiatives in the video surveillance space. She also co-founded a startup, dLoop Inc., which was acquired by Box in 2013. At Box, Divya led the team that built the first machine learning capabilities into the Box platform. She is passionate about openly sharing knowledge and information and is always working to bridge the technology gap for product innovation.

Roland Memisevic

Chief Scientist, TwentyBN

Day 1, 10:00 - 11:30am

Teaching machines common sense understanding of the world around them

In this talk, I will introduce an AI system that interacts with you while "looking" at you, to understand your behaviour, your surroundings, and the full context of the engagement. At the core of this technology is a crowd-acting platform that allows humans to engage with and teach the system about everyday aspects of our lives and of our physical world. Combining this with deep neural networks makes it possible to generate a high degree of human-like "awareness" of everyday scenes and situations. I will describe how this technology allows devices, ranging from information kiosks to cars, to engage with humans more naturally and instinctively, and how TwentyBN uses this ability to create commercial value for our customers.

Speaker Bio

Roland Memisevic received his PhD in Computer Science from the University of Toronto in 2008, doing research on neural networks. He subsequently held positions as a research scientist at PNYLab, Princeton, as a post-doc at the University of Toronto and at ETH Zurich, and as a junior professor at the University of Frankfurt. In 2012 he joined the MILA deep learning group at the University of Montreal as an assistant professor. Since 2016 he has been Chief Scientist at the Canadian-German AI startup Twenty Billion Neurons, which he co-founded in 2015. Roland was named a Fellow of the Canadian Institute for Advanced Research (CIFAR) in 2015. His research interests are in deep and recurrent neural networks, in particular as applied to video understanding.

Mario Munich

SVP Technology, iRobot

Day 2, 9:40 - 10:40am

Consumer robotics: embedding affordable AI in everyday life

The availability of affordable electronic components, powerful embedded microprocessors, and ubiquitous internet access and WiFi in the household has enabled a new generation of connected consumer robots. In 2015, iRobot launched the Roomba 980, introducing intelligent visual navigation to its successful line of vacuum cleaning robots. In 2018, iRobot launched the Roomba i7, equipped with the latest mapping and navigation technology that provides spatial information to the broader ecosystem of connected devices in the home. In this talk, I will describe the challenges and the potential of introducing consumer robots capable of developing spatial context by exploring the physical space of the home, and I will elaborate on the impact of AI on the future of robotics applications. Moreover, I will describe our vision of the Smart Home, an AI-powered home that maintains itself and magically just does the right thing in anticipation of occupant needs. This home will be built on an ecosystem of connected and coordinated robots, sensors, and devices that provides the occupants with a high quality of life by seamlessly responding to the needs of daily living, from comfort to convenience to security to efficiency.

Junling Hu

CEO, Question.ai

Day 1, 8:50 - 9:00am

Opening Remarks

by Junling Hu, Conference Chair

Speaker Bio

Junling Hu is the founder and CEO of question.ai. She is also the Chair of the AI Frontiers Conference (https://aifrontiers.com). Before starting her company, she was Director of Data Mining at Samsung, where she led a team to build large-scale recommender systems. Prior to Samsung, Dr. Hu led data science teams at PayPal and eBay, providing machine learning solutions for company-wide operations ranging from product search ranking and sales prediction to user opinion mining and targeted marketing. Dr. Hu has more than 1,000 scholarly citations on her papers. She is a recipient of a CAREER award from the National Science Foundation for her work on multi-agent reinforcement learning. She holds a Ph.D. in AI from the University of Michigan, Ann Arbor.

Li Deng

Chief AI Officer, Citadel

Day 1, 3:15 - 4:30pm

From Modeling Speech and Language to Modeling Financial Markets

I will first survey how deep learning has disrupted the speech and language processing industries since 2009. Then I will draw connections between the techniques for modeling speech and language and those for modeling financial markets. Finally, I will address three unique technical challenges in financial investment.

Speaker Bio

Li Deng joined Citadel as its Chief AI Officer in May 2017. Previously, he was chief scientist of AI and partner research manager at Microsoft; he has also been a professor at the University of Waterloo in Ontario and held teaching/research positions at MIT (Cambridge), ATR (Kyoto, Japan), and HKUST (Hong Kong). He is a fellow of the IEEE, the Acoustical Society of America, and the ISCA. He has also been an affiliate professor at the University of Washington since 2000. In recognition of his pioneering work on disrupting the speech recognition industry using large-scale deep learning, he received the 2015 IEEE SPS Technical Achievement Award for Outstanding Contributions to Automatic Speech Recognition and Deep Learning. He has also received numerous best paper and patent awards for contributions to artificial intelligence, machine learning, information retrieval, multimedia signal processing, speech processing, and human language technology. Deng is an author or coauthor of six technical books on deep learning, speech processing, discriminative machine learning, and natural language processing.

Ashok Srivastava

Chief Data Officer, Intuit

Day 1, 3:15 - 4:30pm

Using AI to Solve Complex Economic Problems

Nearly half of all small businesses fail within their first 5 years. However, AI-driven solutions can help solve complex economic problems for consumers and small businesses, such as missed bill payments, insufficient capital, overinvestment in fixed assets, and more.

Ashok Srivastava discusses technology's role in solving economic problems and details how Intuit is using its unrivaled financial dataset to power prosperity around the world. By combining technology enablers such as deep learning, natural language processing, and automated reasoning with a delightful end-user experience, Intuit is helping its users have more confidence in their financial management.

Speaker Bio

Ashok N. Srivastava is the senior vice president and chief data officer at Intuit, where he is responsible for setting the vision and direction for large-scale machine learning and AI across the enterprise to help power prosperity across the world; in the process, he is hiring hundreds of people in machine learning, AI, and related areas at all levels. Ashok has extensive experience in the research, development, and implementation of machine learning and optimization techniques on massive datasets, and he serves as an advisor in the area of big data analytics and strategic investments to companies including Trident Capital and MyBuys. Previously, Ashok was vice president of big data and artificial intelligence systems and the chief data scientist at Verizon, where his global team focused on building new revenue-generating products and services powered by big data and artificial intelligence; senior director at Blue Martini Software; and senior consultant at IBM. He is an adjunct professor in the Electrical Engineering Department at Stanford and is the editor-in-chief of the AIAA Journal of Aerospace Information Systems. Ashok is a fellow of the IEEE, the American Association for the Advancement of Science (AAAS), and the American Institute of Aeronautics and Astronautics (AIAA). He has won numerous awards, including the Distinguished Engineering Alumni Award, the NASA Exceptional Achievement Medal, the IBM Golden Circle Award, the Department of Education Merit Fellowship, and several fellowships from the University of Colorado. Ashok holds a PhD in electrical engineering from the University of Colorado at Boulder.

Yazann Romahi

Chief Investment Officer, JP Morgan Chase

Day 1, 3:15 - 4:30pm

The Pitfalls of Using AI in Trading Strategies

Because of the success of momentum-based strategies, most AI practitioners come into finance thinking they can achieve easy wins by applying AI to time series analysis. We outline how this can be a trap, along with other common misconceptions about AI in finance. We discuss the value of new sources of data and how we have used them successfully. By way of example, we walk through an application of natural language processing to enhance our equity long/short and event-driven hedge fund strategies.

Speaker Bio

Yaz Romahi, PhD, CFA, managing director, is the Chief Investment Officer, Quantitative Beta Strategies at JPMorgan Asset Management, focused on building the firm's factor-based franchise across both alternative beta and strategic beta. Prior to that he was Head of Research and Quantitative Strategies in Multi Asset Solutions, responsible for the quantitative models that help establish the broad asset allocation reflected across Multi-Asset Solutions portfolios globally. Prior to joining J.P. Morgan in 2003, Yaz worked as a research analyst at the Centre for Financial Research at the University of Cambridge and undertook consulting assignments for a number of financial institutions including Pioneer Asset Management, PricewaterhouseCoopers and HSBC. Yaz holds a PhD in Computational Finance/Artificial Intelligence from the University of Cambridge and an MSc in Artificial Intelligence from the University of Edinburgh.

Rajarshi Gupta

Head of AI, Avast

Day 1, 11:30 - 12:00pm

Security is AI’s biggest challenge, and AI is Security’s greatest opportunity

The progress of AI in the last decade has seemed almost magical. But we will discuss the unique challenges posed by security and what makes this domain the biggest challenge for AI. Reporting from the front lines, we will describe the deployment of large-scale, production-grade AI systems to combat security breaches, using lessons learned at Avast from defending over 400 million consumers every single day. Topics include recent AI advances in file-based anti-malware solutions, behavior-based on-device solutions, and network-based IoT security solutions.

Speaker Bio

Rajarshi Gupta is the Head of AI at Avast Software, one of the largest consumer security companies in the world. He has a PhD in EECS from UC Berkeley and has built unique expertise at the intersection of artificial intelligence, cybersecurity, and networking. Prior to joining Avast, Rajarshi worked for many years at Qualcomm Research, where he created ‘Snapdragon Smart Protect’, the first-ever product to achieve on-device machine learning for security. Rajarshi loves to work on innovative problems and has authored over 175 issued U.S. patents.

Roger Roberts

Partner, McKinsey & Company

Day 1, 2:30 - 2:45pm

AI for Societal Good

Beyond its transformative role in business and the economy, AI is starting to be deployed for uses that benefit individuals and society, from helping detect cancers to tailoring education for autistic students or predicting which homes have lead in their water pipes. This session, based on McKinsey Global Institute’s analysis of about 160 social impact use cases, will examine domains where AI could be deployed for the benefit of society, factors that limit its deployment, and risks that will need to be mitigated if the benefits are to be realized.

Speaker Bio

Roger Roberts is a partner in McKinsey & Company’s Silicon Valley office, where he leads the Consumer sector (online commerce, CPG, and retail) for McKinsey’s Digital & Analytics Tech practice in North America, with a focus on innovation and tech-enabled transformation. He brings over 25 years of experience in helping clients conceive and apply technology solutions to enhance innovation and productivity. He has also led McKinsey research on the impact of IT on economic productivity, on the role of IT architecture as an enabler of flexible business strategies, and on the key business trends sparked by analytics and AI-enabled innovation. He has led McKinsey’s collaborations with a range of academic researchers and innovative companies on emerging technologies, and he has served on the Board of the Center for Digital Business at MIT. Roger joined McKinsey in 1992 and holds B.S. and M.S. degrees in Industrial Engineering from Stanford University, as well as an MBA from the MIT Sloan School of Management.

Anima Anandkumar

Director of Machine Learning Research, NVIDIA

Day 2, 11:20 - 11:45pm

Large-scale Machine Learning: Deep, Distributed and Multi-Dimensional

As data and models scale, it becomes necessary to use multiple processing units for both training and inference. signSGD is a gradient compression algorithm that transmits only the sign of the stochastic gradients during distributed training. Because each gradient coordinate is sent as a single bit rather than a 32-bit floating-point number, the algorithm uses 32 times less communication per iteration than distributed SGD. We show that signSGD obtains a free lunch both in theory and in practice: no loss in accuracy while yielding speedups. Pushing the current boundaries of deep learning also requires using multiple dimensions and modalities. These can be encoded into tensors, which are natural extensions of matrices. These functionalities are available in the TensorLy package, which offers multiple backend interfaces for large-scale deep learning.
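The communication saving can be illustrated with a minimal single-process simulation of signSGD with majority vote, one formulation of the algorithm: each worker sends only the sign of its stochastic gradient (one bit per coordinate instead of a 32-bit float, hence the factor of 32), a server takes an elementwise majority vote, and every worker steps along the voted sign. The quadratic objective, the Gaussian gradient noise, the learning rate, and the helper names (`noisy_gradient`, `sign_bits`, `majority_vote`, `signsgd_majority`) are assumptions chosen for illustration; they are not taken from the talk or from TensorLy.

```python
import random


def noisy_gradient(x, target, rng, noise=0.5):
    """Stochastic gradient of the toy objective f(x) = 0.5 * ||x - target||^2."""
    return [xi - ti + rng.gauss(0.0, noise) for xi, ti in zip(x, target)]


def sign_bits(vec):
    """Compress a vector to one sign (+1/-1) per coordinate, i.e. one bit each."""
    return [1 if v >= 0 else -1 for v in vec]


def majority_vote(worker_signs):
    """Server side: elementwise sign of the sum of the workers' sign vectors."""
    return sign_bits([sum(column) for column in zip(*worker_signs)])


def signsgd_majority(target, num_workers=5, steps=500, lr=0.01, seed=0):
    """Simulate distributed signSGD with majority vote on the toy objective."""
    rng = random.Random(seed)
    x = [0.0] * len(target)
    for _ in range(steps):
        # Each worker uploads len(x) bits instead of 32 * len(x) bits.
        signs = [sign_bits(noisy_gradient(x, target, rng)) for _ in range(num_workers)]
        # The server broadcasts the voted sign, also one bit per coordinate.
        vote = majority_vote(signs)
        x = [xi - lr * s for xi, s in zip(x, vote)]
    return x


print(signsgd_majority([1.0, -2.0, 0.5]))
```

Using an odd number of workers means the vote can never tie. Because every update has magnitude `lr` per coordinate, the iterate ends up oscillating within a small neighborhood of the minimizer rather than converging exactly; a decaying learning rate is a common remedy.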

Speaker Bio

Anima Anandkumar is a Bren Professor in the Caltech CMS department and a director of machine learning research at NVIDIA. Her research spans both theoretical and practical aspects of large-scale machine learning. In particular, she has spearheaded research in tensor-algebraic methods, non-convex optimization, probabilistic models, and deep learning.

Anima is the recipient of several awards and honors, such as the Bren named chair professorship at Caltech, the Alfred P. Sloan Fellowship, Young Investigator awards from the Air Force and Army research offices, faculty fellowships from Microsoft, Google, and Adobe, and several best paper awards. She was recently nominated to the World Economic Forum's Expert Network, which consists of leading experts from academia, business, government, and the media. She has been featured in documentaries by PBS, KPCC, and Wired magazine, and in articles by MIT Technology Review, Forbes, YourStory, O’Reilly Media, and others.

Anima received her B.Tech in Electrical Engineering from IIT Madras in 2004 and her PhD from Cornell University in 2009. She was a postdoctoral researcher at MIT from 2009 to 2010, a visiting researcher at Microsoft Research New England in 2012 and 2014, an assistant professor at U.C. Irvine between 2010 and 2016, an associate professor at U.C. Irvine between 2016 and 2017 and a principal scientist at Amazon Web Services between 2016 and 2018.

Sameer Sharma

Global GM IoT, Intel

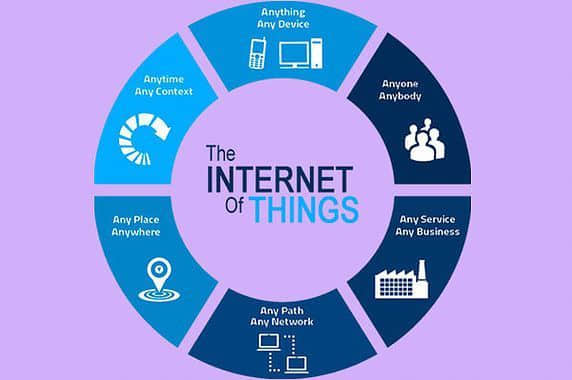

Day 1, 4:30 - 5:30pm

Going for the Edge - AI @ IoT

IoT implementations have moved from “Connect the Unconnected” to smart connected devices and solutions. The next big inflection point will be around autonomous systems, and AI + data will play a pivotal role in enabling this autonomy. This will play out in intelligent factories, cities, and buildings. We will use computer vision as an example to walk through this imminent step-function transition.

Speaker Bio

Sameer Sharma is the Global GM (New Markets/Smart Cities) for IoT Solutions at Intel and a thought leader in the IoT/mobile ecosystem, having driven multiple strategic initiatives over the past 19 years. Sameer leads a global team that incubates and scales new growth categories and business models for Intel in IoT and smart cities. His team also focuses on establishing leadership across the industry, playing a pivotal role in deploying solutions for the development of smart cities around the world, an important effort in furthering the goal of sustainability. These solutions include intelligent transportation, AI + video, air quality monitoring, and smart lighting in cities. With far-reaching impact, each of these solutions is providing local governments with a wealth of data to enhance the daily quality of life for citizens while simultaneously promoting responsible practices to protect the environment.

Sameer has an MBA from The Wharton School at UPenn and a Master's in Computer Engineering from Rutgers University. He holds 11 patents in the areas of IoT and mobile.

Himagiri Mukkamala

GM & SVP, ARM

Day 1, 4:30 - 5:30pm

The fifth wave – AI-driven computing for IoT

We are entering an era of data-driven computing: IoT will collect data, 5G will transport it, and ML will process it. The combination of these forces will bring a transformation unlike anything seen before. 5G alone is expected to bring economic impact akin to the industrial revolution, and the IoT is predicted to bring trillions to many different industries. The fifth wave represents a massive opportunity for the ecosystem to create new businesses and drive economic growth. Those one trillion devices will not just be connected; they will be intelligent, a paradigm shift enabled by ML in every IoT endpoint. The bigger impact of AI is already happening today, as machine learning becomes part of our daily lives in billions of tiny moments. Machine learning will be needed to handle the massive amount of data that will be part of the fifth wave. Data itself isn’t always valuable if its volume obscures the important information, so we can use ML at the edge to identify the critical data that should be shared. This is why we as an industry need to focus on the edge for the future of AI. We’ve worked hard on moving data to be processed somewhere else, but for a trillion devices that approach isn’t sustainable: moving, processing, and storing data all have costs in terms of efficiency and security. We need to shift our focus to processing data where it is collected and used, driving more value from IoT.

Speaker Bio

Himagiri (Hima) Mukkamala is senior vice president and general manager for IoT Cloud Services at Arm. He is responsible for leading the organization that defines and delivers IoT cloud services to connect, secure, and manage IoT devices. Previously, he led the Predix™ Edge/Cloud Services engineering and technical product management organization, delivering high-quality software using Agile/XP principles. In his earlier role at Sybase, Hima led various groups in the Mobile Platform organization and played a significant part in Sybase's successful acquisition by SAP.

Kai-Fu Lee

Chairman & CEO, Sinovation Ventures

Day 1, 5:30 - 6:00pm

Keynote

Speaker Bio

His latest book, AI Superpowers (aisuperpowers.com), releasing in fall 2018, discusses US-China co-leadership in the age of AI as well as the broader societal impacts of the AI technology revolution.

Dr. Kai-Fu Lee is the Chairman and CEO of Sinovation Ventures (www.sinovationventures.com) and President of Sinovation Ventures' Artificial Intelligence Institute. Sinovation Ventures, which manages US$2 billion in dual-currency investment funds, is a leading venture capital firm focused on developing the next generation of Chinese high-tech companies. Prior to founding Sinovation in 2009, Dr. Lee was the President of Google China. Previously, he held executive positions at Microsoft, SGI, and Apple. Dr. Lee received his bachelor's degree in Computer Science from Columbia University and his Ph.D. from Carnegie Mellon University, as well as honorary doctorates from both Carnegie Mellon and the City University of Hong Kong. He is also a Fellow of the Institute of Electrical and Electronics Engineers (IEEE) and has more than 50 million followers on social media.

In the field of artificial intelligence, Dr. Lee built one of the first game-playing programs to defeat a world champion (1988, Othello), as well as the world's first large-vocabulary, speaker-independent continuous speech recognition system. Dr. Lee founded Microsoft Research China, which MIT Technology Review named the hottest research lab. Later renamed Microsoft Research Asia, this institute trained the great majority of AI leaders in China, including CTOs and AI heads at Baidu, Tencent, Alibaba, Lenovo, Huawei, and Haier. While with Apple, Dr. Lee led AI projects in speech and natural language that were featured on ABC Television's Good Morning America and on the front page of the Wall Street Journal. He has authored 10 U.S. patents and more than 100 journal and conference papers. Altogether, Dr. Lee has been in artificial intelligence research, development, and investment for more than 30 years.

Apoorv Saxena

Global Head of AI, JPMorgan Chase

Day 2, 9:00 - 9:05am

Day 2 Opening Remarks

by Apoorv Saxena, Conference Co-Chair

Melissa Goldman

Chief Information Officer, JPMorgan Chase

Day 2, 9:05 - 9:40am

Morning Keynote

Arnaud Thiercelin

Head of R&D, DJI

Speaker Bio

Arnaud Thiercelin is the Head of R&D for North America at DJI, overseeing global developer technologies and enterprise R&D projects. In this role, he is responsible for driving the vision for DJI's developer technologies and enterprise solutions, managing teams in Palo Alto and Shenzhen, China. Arnaud has more than 15 years of experience in software development, from embedded systems to cloud infrastructure. He has been in the United States for nearly a decade and is originally from France, where he studied at Epitech.

Wei Xu

Chief Scientist of General AI, Horizon Robotics

Speaker Bio

Wei Xu is the chief scientist of general AI at Horizon Robotics Inc., where he focuses on artificial general intelligence research. A leading deep learning expert, Wei Xu has more than 20 years of research experience in the field. From 2013 to 2018, he was a distinguished scientist at Baidu Inc., where he started Baidu's open source deep learning framework PaddlePaddle and led general AI research. From 2009 to 2013, he was a research scientist at Facebook, where he developed and deployed a recommendation system capable of handling billions of objects and more than a billion users. From 2001 to 2009, he was a researcher at NEC Laboratories, where he developed convolutional neural networks for a variety of visual understanding tasks and deployed them to the surveillance systems of many US airports. A Forbes article listed Wei as one of the 20 leading technologists driving China's AI revolution.

Nikhil Krishnan

Group VP, Products, C3 IoT

Day 2, 1:00 - 2:00pm

AI-Based Stochastic Supply Chain Optimization

Over the years, manufacturing companies have deployed Material Requirements Planning (MRP) software solutions that support production planning and automated inventory and supplier management. However, most MRP software solutions were never designed to leverage emerging Big Data and AI/machine learning technologies to better optimize supply chains.

In this talk, we explain how C3 Inventory Optimization applies new technologies and approaches to optimize inventory levels, improve service levels, and proactively manage supplier delays. C3 Inventory Optimization can manage several real-world uncertainties, including variability in demand, supplier delivery times, quality issues with parts delivered by suppliers, and production line disruptions. It is particularly relevant to manufacturers of highly configurable, sophisticated equipment and to manufacturers with a large number of SKUs, both of which often deal with significant complexity.
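To make "managing real-world uncertainties" a little more concrete, here is a minimal Monte Carlo sketch of one classic stochastic inventory question: how much stock must be on hand when an order is placed so that demand during a random supplier lead time is covered in a target fraction of scenarios. This is not C3's method; the uniform demand and lead-time model, the ranges, the 95% service target, and all function names are illustrative assumptions.

```python
import math
import random

def sample_lead_time_demand(n_trials, daily_demand_range, lead_time_range, seed=0):
    """Monte Carlo samples of total demand during one replenishment lead time.

    Assumed model (illustrative only): daily demand and supplier lead time are
    independent, uniformly distributed integers within the given inclusive ranges.
    """
    rng = random.Random(seed)
    samples = []
    for _ in range(n_trials):
        lead_time = rng.randint(*lead_time_range)  # supplier delay in days
        demand = sum(rng.randint(*daily_demand_range) for _ in range(lead_time))
        samples.append(demand)
    return samples

def reorder_point(samples, service_level):
    """Smallest sampled stock level that covers lead-time demand in at least
    `service_level` of the simulated scenarios (an empirical quantile)."""
    ordered = sorted(samples)
    index = max(0, math.ceil(service_level * len(ordered)) - 1)
    return ordered[index]

if __name__ == "__main__":
    demand = sample_lead_time_demand(10_000, daily_demand_range=(5, 15), lead_time_range=(3, 10))
    mean = sum(demand) / len(demand)
    rop = reorder_point(demand, service_level=0.95)
    print(f"mean lead-time demand: {mean:.1f}")
    print(f"reorder point for 95% service: {rop} (safety stock ~ {rop - mean:.1f})")
```

The gap between the reorder point and the mean lead-time demand is the safety stock the uncertainty costs; a production system would replace the toy distributions with ones fitted to real demand, supplier, and quality data.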

Sarah Guo

General Partner, Greylock Partners

Day 2, 4:00 - 4:20pm

Investing in AI Startups

Speaker Bio

Sarah is interested in almost any area where technology can be used as a weapon to get us to the future, faster. She spends a lot of her time thinking about opportunities in B2B applications and infrastructure, cyber security, artificial intelligence, augmented reality, and healthcare.

Sarah joined Greylock Partners as an investor in 2013. She led Greylock's investment in Cleo, serves on the boards of Cleo and Obsidian, and works closely with Awake, Crew, Rhumbix, and Skyhigh. Prior to joining Greylock, Sarah was at Goldman Sachs, where she invested in growth-stage technology startups such as Dropbox and advised pre-IPO technology companies such as Workday (as well as public clients including Zynga, Netflix, and Nvidia). Previously, Sarah worked with Casa Systems (NASDAQ:CASA), a publicly traded technology company that develops a software-centric networking platform for cable and mobile service providers.

She is an advocate for STEM education for women and the underserved. She has taught Marketing in the Wharton Undergraduate Program and served as a teaching fellow in lower-income high schools for the Philadelphia World Affairs Council. Sarah has four degrees from the Wharton School and the University of Pennsylvania. She is part of LinkedIn's Next Wave and Forbes 30 Under 30.

Rohit Tripathi

GM & Head of Products, SAP Digital Interconnect

Day 1, 4:30 - 5:30pm

Using intelligent connectivity and AI to transform the world of IoT (Slides)

We are in the midst of significant improvements in sensor and device capabilities, coupled with advances in AI and the promise of low-latency, high-speed connectivity options such as 5G and NB-IoT. The confluence of these three trends has the potential to transform and simplify the world of IoT. This talk will explore these trends and their impact on the world of IoT.

Speaker Bio

Rohit Tripathi is Head of Products and Go-to-Market for SAP Digital Interconnect and brings with him over 20 years of experience in software and business operations.

In his current role, Rohit focuses on bringing to market value-added products and solutions that help SAP Digital Interconnect customers engage, secure, and gather actionable insights in the digital world. Rohit has authored CIO guides on using Big Data technologies.

Prior to joining SAP, Rohit was with The Boston Consulting Group where he advised senior executives of Fortune 500 companies on business strategy and operations.

Long Lin

Director of AI, Electronic Arts

Day 2, 2:00 - 2:50pm

AI in Gaming (Slides)

Games have been leveraging AI since the 1950s, when people built a rules-based AI engine that played tic-tac-toe. With technological advances over the years, AI has become increasingly popular and widely used in the gaming industry. The typical characteristics of games and game development make them an ideal playground for practicing and implementing AI techniques, especially deep learning and reinforcement learning: most games are well scoped, it is relatively easy to generate and use the data, and states, actions, and rewards are relatively clear. In this talk, I will show a couple of use cases where ML/AI helps game development and enhances the player experience. Examples include AI agents that play games and services that provide personalized experiences to players.

Speaker Bio

Long Lin is Director of Engineering on the Data & AI team at Electronic Arts, where he leads the overall technical direction of the Player Profile Service, Player Relationship Management Platform, Recommendation Engine, Experimentation Platform, and AI Agent & Simulation. Prior to EA, Long worked at WalmartLabs and eBay, where he led R&D efforts for the Ad Platform, Customer Profile Service, and multiple e-commerce services. Long is an expert in data engineering, applied machine learning, and live services.

Mark Moore

Engineering Director, Uber

Yuandong Tian

Research Manager, Facebook AI Research

Day 2, 2:00 - 2:50pm

Deep Reinforcement Learning Framework for Games (Slides)

Deep Reinforcement Learning (DRL) has made strong progress in many tasks, such as board games, robotics, navigation, and neural architecture search. I will present our recently open-sourced DRL frameworks, which facilitate game research and development. Our framework is scalable enough to reproduce AlphaGo Zero and AlphaZero using 2,000 GPUs, yielding a superhuman Go AI that beat four top-30 professional players. We also demonstrate the usability of our platform by training agents in real-time strategy games, and show interesting behaviors that emerge with a small amount of resources.

Speaker Bio

Yuandong Tian is a Research Scientist and Manager at Facebook AI Research, working on deep reinforcement learning and its applications in games, as well as theoretical analysis of deep models. He is the lead scientist and engineer for the ELF OpenGo and DarkForest Go projects. Prior to that, he was a researcher and engineer on the Google Self-driving Car team in 2013-2014. He received his Ph.D. from the Robotics Institute at Carnegie Mellon University in 2013, and his bachelor's and master's degrees in Computer Science from Shanghai Jiao Tong University. He received a 2013 ICCV Marr Prize Honorable Mention.

Sumit Gupta

VP of AI, IBM

Day 2, 1:00 - 2:00pm

AI for the Enterprise (Slides)

The use of AI for voice search and image recognition is often discussed. Enterprises, however, have different challenges and requirements. In this talk, we will focus on enterprise use cases and the challenges of building AI solutions. We will discuss how PowerAI Vision, automated machine learning software for video and images, enables quick AI model training and deployment for various enterprise use cases.

Speaker Bio

Sumit Gupta is VP, AI, Machine Learning, and HPC in the IBM Cognitive Systems business. Sumit leads the business strategy and the software and hardware products for machine learning, deep learning, and HPC. Prior to IBM, Sumit was the general manager of the AI & GPU accelerated data center business at NVIDIA and was central in building that business from the ground up into what is now a multi-billion dollar business for NVIDIA. Sumit has a Ph.D. in CS from UC Irvine and a BS in EE from IIT Delhi.

Alex Ermolaev

Director of AI, Change Healthcare

Day 2, 2:50 - 3:10pm

Major Applications of AI in Healthcare (Slides)

The latest AI advances have the potential to massively improve our health and well-being. However, most of the work is yet to be done. In this talk, we will explore the most important opportunities for AI in healthcare. For example, we will explore how AI can diagnose major life-threatening conditions even before those conditions emerge. We will discuss AI's ability to recommend dramatically more effective and less harmful treatment plans based on its understanding of a patient's medical history and current conditions. Finally, we will talk about AI's role in making our healthcare system effective and affordable for everyone.

Speaker Bio

Alex Ermolaev has worked on AI applications on and off ever since earning his original degree in the subject more than 20 years ago. His experience includes enterprise AI, AI platforms and tools, NLP, imaging, and self-driving cars. Alex currently works as Director of AI for Change Healthcare, one of the largest healthcare technology companies in the world.

Samir Kumar

Managing Director, M12

Ankit Jain

Founding Partner, Google Gradient Ventures

Yoav Samet

Managing Partner, StageOne Ventures

Speaker Bio

Managing Partner at StageOne Ventures, looking to partner with great tech entrepreneurs at the inception or early stage.

Over 20 years of experience leading strategy, M&A, venture capital investments, and R&D in the technology industry.

Led Cisco’s corporate development activities (M&A, Investments, and Strategy) covering about a third of Cisco’s business, including Cisco's Service Provider, Mobility, and software-enabled Services & Solutions businesses, as well as regional corporate development coverage for Europe, Israel, and Emerging Markets. Led multiple company acquisitions for Cisco, and managed a $750m portfolio of Cisco's equity investments including over 50 direct minority investments, as well as LP positions with over 20 venture capital funds worldwide. Earlier background in venture capital, operational management, entrepreneurship, and software development leadership roles.

Specialties: Venture Capital, M&A, Corporate Development, Business Development, Early Stage Investments, Corporate Strategy, Inorganic Growth Strategy, Networking Equipment & Telecom Infrastructure, Mobile Infrastructure, Security Software & Services, Growth Technology Investing, Emerging Markets, Fund Formation, Global Innovation, Video & Imaging Software, Management, Leadership.

Lukasz Kaiser

Staff Research Scientist, Google Brain

Speaker Bio

Lukasz joined Google in 2013 and is currently a senior Research Scientist in the Google Brain Team in Mountain View, where he works on fundamental aspects of deep learning and natural language processing. He has co-designed state-of-the-art neural models for machine translation, parsing and other algorithmic and generative tasks and co-authored the TensorFlow system and the Tensor2Tensor library. Before joining Google, Lukasz was a tenured researcher at University Paris Diderot and worked on logic and automata theory. He received his PhD from RWTH Aachen University in 2008 and his MSc from the University of Wroclaw, Poland.

Ni Lao

Chief Scientist and Co-Founder, Mosaix.ai

Speaker Bio

Dr. Ni Lao, Chief Scientist and co-founder of Mosaix.ai, is an expert in Knowledge Graph (KG) and weakly supervised Natural Language Understanding (NLU). He is well known for his work on large-scale inference for the CMU Never-Ending Language Learning (NELL) project and the Google Knowledge Vault project. He recently led research projects applying innovative reinforcement learning approaches to achieve new state-of-the-art results on weakly supervised NLU tasks. His past research has contributed to Google's KG and search-based question answering products.

T.M. Ravi

Director and Founder, The Hive

Speaker Bio

T. M. Ravi is Managing Director and Co-founder of The Hive (www.hivedata.com). The Hive, based in Palo Alto, CA, is a venture fund and co-creation studio for Artificial Intelligence (AI) powered startups. The Hive engages with entrepreneurs and corporations to create companies focused on data- and AI-driven applications in the enterprise and in different industry segments. The Hive also has a presence in India and Brazil. Ravi is a frequent speaker at conferences on the topics of AI, enterprise transformation, and innovation. Ravi has a successful track record as a serial entrepreneur and operating executive. He has helped start over 25 startups, including three where he was founder and CEO: Mimosa Systems (acquired by Iron Mountain), Peakstone Corporation, and Media Blitz (acquired by Cheyenne Software). Ravi was also CMO for Iron Mountain, VP of Marketing at Computer Associates (CA), and VP at Cheyenne Software. Ravi earned an MS and PhD from UCLA and a Bachelor of Technology from IIT Kanpur, India. He is on the board of Montalvo Art Center based in Saratoga, CA.

Gregory La Blanc

Faculty Director, Haas School of Business

Fang Yuan

Vice President, Baidu Ventures

Speaker Bio

Fang Yuan is a VP of investments at Baidu Ventures (based in SF), focusing on AI and robotics at the seed and Series A stages. Baidu Ventures is the non-strategic investment arm of Baidu, launched in early 2017 with a $200MM first fund. It focuses particularly on AI and robotics applied to specific industry verticals such as healthcare, transportation, agriculture, and retail. Baidu Ventures has made about 35 investments in North America.

Vijay Reddy

Investor, Intel Capital

Speaker Bio

Vijay Reddy leads investments in Artificial Intelligence platforms, algorithms, and infrastructure, as well as applications of AI in computer vision, robotics, autonomous systems, industrial, and other adjacent areas. Vijay is a board observer for, or otherwise responsible for, several portfolio companies, including Matroid, MightyAI, AEYE, Cognitive Scale, and Paperspace. Prior to joining Intel Capital, Vijay held business development and product management leadership positions in the communications, software, and wireless domains. He began his career as a researcher and entrepreneur in wireless and software engineering. Vijay received his MBA from Chicago Booth and is a Kauffman Fellow.

Mohan Reddy

CTO, The Hive

Our Topics

Video Understanding

How can deep learning be applied to understand videos? What are the advances in computer vision?

Natural Language Processing

How has deep learning revolutionized natural language processing? What applications can we build today?

Robots

What is the current state of robotics? How are robots becoming smarter?

Drones

A look at the future of autonomous drones and how they will transform our lives.

Deep Learning Breakthrough

The major breakthroughs in deep learning algorithms and their implications.

AI in Healthcare

A look at the application of machine learning in the healthcare industry.

AI in Finance

The growing presence of machine learning in finance.

Edge Computing

How will edge computing impact the industry?

Games

The growing presence of deep learning in game play and its impact for future games.

Internet Of Things

How do we apply deep learning to understand data from connected devices?

AI in Enterprises

A look at future AI applications in enterprises.

Schedule

In this three-day AI conference in San Jose, we bring together leading scientists and practitioners who have deployed large-scale AI products. You will gain a front-row seat to the frontiers of AI and machine learning and have opportunities to network with others who are enthusiastic about AI technologies and products.

Day 1, November 9, 2018

8:50 - 9:00am

Opening Remarks

by Junling Hu, Conference Chair

9:00 - 9:50am

Keynote

Ilya Sutskever, Co-Founder & Director, OpenAI

Ilya Sutskever : Recent Advances in Deep Learning and AI from OpenAI (Slides)

I will present several advances in deep learning from OpenAI. First, I will present OpenAI Five, a neural network that learned to play on par with some of the strongest professional Dota 2 teams in the world in an 18-hero version of the game. Next, I will present Dactyl, a human-like robot hand trained entirely in simulation with reinforcement learning that has achieved unprecedented dexterity on a physical robot. I will also present our results on unsupervised learning in language, which show that pre-training followed by fine-tuning can significantly improve on the state of the art. Finally, I will present an overview of the historical progress in the field.

10:00 - 11:30am

Video Understanding

Matt Feiszli, Research Manager, Facebook

Divya Jain, Director, Adobe

Roland Memisevic, Chief Scientist, TwentyBN

Moderator: Vijay Reddy, Investor, Intel Capital

Matt Feiszli : Video Understanding: Modalities, Time, and Scale (Slides)

I will discuss the state of the art in video understanding, particularly its research and applications at Facebook. I will focus on two active areas: multimodality and time. Video is naturally multimodal, offering great possibilities for content understanding while also opening new doors such as unsupervised and weakly supervised learning at scale. Temporal representation remains a largely open problem: while we can describe a few seconds of video, there is no natural representation for a few minutes of video. I will discuss recent progress, the importance of these problems for applications, and what we hope to achieve.

Divya Jain : Video Summarization (Slides)

As video content becomes mainstream, video summarization is becoming a hot research topic in academia and industry. Video thumbnail generation and summarization have been studied for years, but deep learning and reinforcement learning are changing the landscape and emerging as the winning approaches for optimal frame selection. Recent advances in GANs are improving the quality, aesthetics, and relevance of the frames chosen to represent the original videos. Come join this session to get an understanding of the various challenges and emerging solutions around video summarization.
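To illustrate what "frame selection" means in its simplest form, here is a sketch of a classical baseline, not the deep learning, reinforcement learning, or GAN methods the session covers: greedy farthest-point selection picks k keyframes that are maximally spread out in a feature space, so near-duplicate frames are skipped. It assumes per-frame feature vectors (for example color histograms or CNN embeddings) are computed elsewhere; the function name and the toy 2-D vectors below are hypothetical.

```python
import math

def select_keyframes(features, k):
    """Pick up to k frame indices that are spread out in feature space.

    Greedy farthest-point selection: start from the first frame, then repeatedly
    add the unselected frame whose distance to its nearest selected frame is largest.
    """
    if not features or k <= 0:
        return []
    selected = [0]
    # Distance from every frame to its closest selected frame so far.
    nearest = [math.dist(f, features[0]) for f in features]
    while len(selected) < min(k, len(features)):
        candidate = max(
            (i for i in range(len(features)) if i not in selected),
            key=lambda i: nearest[i],
        )
        selected.append(candidate)
        nearest = [min(d, math.dist(f, features[candidate])) for d, f in zip(nearest, features)]
    return sorted(selected)  # return keyframes in temporal order

if __name__ == "__main__":
    # Toy 2-D "features": two near-duplicate pairs plus one distinct frame.
    frames = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0), (5.1, 5.0), (10.0, 0.0)]
    print(select_keyframes(frames, 3))  # [0, 2, 4]
```

Learned summarizers replace the fixed distance criterion with a model that also scores importance, quality, and aesthetics, which is where the approaches described above come in.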

Roland Memisevic : Teaching machines common sense understanding of the world around them (Slides)

In this talk, I will introduce an AI system that interacts with you while "looking" at you, to understand your behaviour, your surroundings, and the full context of the engagement. At the core of this technology is a crowd-acting platform that allows humans to engage with and teach the system about everyday aspects of our lives and of our physical world. Combining this with deep neural networks makes it possible to generate a high degree of human-like "awareness" of everyday scenes and situations. I will describe how this technology allows devices, ranging from information kiosks to cars, to engage with humans more naturally and instinctively, and how TwentyBN uses this ability to create commercial value for our customers.

11:30 - 12:00pm

AI in Security

Rajarshi Gupta, Head of AI, Avast

Rajarshi Gupta : Security is AI’s biggest challenge, and AI is Security’s greatest opportunity (Slides)

The progress of AI in the last decade has seemed almost magical. But we will discuss the unique challenges posed by Security and what makes this domain the biggest challenge for AI. Reporting from the frontlines, we will describe the deployment of large-scale production-grade AI systems to combat security breaches, using lessons learned at Avast from defending over 400 million consumers every single day. Topics will cover the recent AI advancements in file-based anti-malware solutions, behavior-based on-device solutions, and network-based IoT security solutions.

12:00 - 1:00pm

Lunch

1:00 - 1:30pm

Afternoon Keynote

Percy Liang, Assistant Professor, Stanford University

Percy Liang : Pushing the Limits of Machine Learning

In recent years, machine learning has undoubtedly been hugely successful in driving progress in AI applications. However, as we will explore in this talk, even state-of-the-art systems have "blind spots" which make them generalize poorly out of domain and render them vulnerable to adversarial examples. We then suggest that more unsupervised learning settings can encourage the development of more robust systems. We show positive results on two tasks: (i) text style and attribute transfer, the task of converting a sentence with one attribute (e.g., sentiment) to one with another; and (ii) solving SAT instances (classical problems requiring logical reasoning) using end-to-end neural networks.

1:30 - 2:30pm

Deep Learning Breakthrough

Quoc LeResearch ScientistGoogle Brain

Quoc LeResearch ScientistGoogle Brain Sumit GulwaniPartner Research ManagerMicrosoft

Quoc Le : Using Machine Learning to Automate Machine Learning (Slides)

Traditional machine learning systems are hand-designed and tuned by machine learning experts. To scale up the impact of machine learning to many real-world applications, we must find a way to automate the design of these pipelines. In this talk, I will discuss the use of machine learning to automate the process of designing neural architectures and data augmentation strategies (Neural Architecture Search and AutoAugment).

Sumit Gulwani : Programming by Examples (Slides)

Programming by examples (PBE) is a new frontier in AI that enables users to create scripts from input-output examples. A killer application is in the space of data wrangling to automate tasks like string/number/date transformations (e.g., converting “FirstName LastName” to “LastName, FirstName”), column splitting, table extraction from log files, webpages, and PDFs, normalizing semi-structured spreadsheets into structured tables, transforming JSON from one format to another, etc. This presentation will educate the audience about this new PBE-based programming paradigm: its applications, form factors inside different products, and the science behind it.

2:30 - 2:45pm

Industry Talk

Roger Roberts, Partner, McKinsey & Company

Roger Roberts : AI for Societal Good

Beyond its transformative role in business and the economy, AI is starting to be deployed for uses that benefit individuals and society, from helping detect cancers to tailoring education for autistic students or predicting which homes have lead in their water pipes. This session, based on McKinsey Global Institute’s analysis of about 160 social impact use cases, will examine domains where AI could be deployed for the benefit of society, factors that limit its deployment, and risks that will need to be mitigated if the benefits are to be realized.

2:45 - 3:15pm

Coffee Break

3:15 - 4:30pm

AI in Finance

Yazann Romahi, Chief Investment Officer, JP Morgan Chase

Li Deng, Chief AI Officer, Citadel

Ashok Srivastava, Chief Data Officer, Intuit

Moderator:

Fang Yuan, Vice President, Baidu Ventures

Yazann Romahi : The Pitfalls of Using AI in Trading Strategies (Slides)

Because of the success of momentum-based strategies, most AI practitioners come into finance thinking they can achieve easy wins by applying AI to time series analysis. We outline how this can be a trap, and other common misconceptions about AI in finance. We discuss the value of new sources of data and how we have used them successfully. By way of example, we walk through an application of natural language processing to enhance our equity long/short and event-driven hedge fund strategies.

Li Deng : From Modeling Speech and Language to Modeling Financial Markets (Slides)

I will first survey how deep learning has disrupted the speech and language processing industries since 2009. Then I will draw connections between the techniques for modeling speech and language and those for modeling financial markets. Finally, I will address three unique technical challenges in financial investment.

Ashok Srivastava : Using AI to Solve Complex Economic Problems (Slides)

Nearly half of all small businesses fail within their first 5 years. However, AI-driven solutions can help solve complex economic problems for consumers and small businesses like missed bill payments, insufficient capital, overinvestment in fixed assets, and more.

Ashok Srivastava discusses technology's role in solving economic problems and details how Intuit is using its unrivaled financial dataset to power prosperity around the world. By leveraging technology enablers like deep learning, natural language processing, and automated reasoning, and combining them with a delightful, sophisticated end-user experience, Intuit is using technology to help its users have more confidence in their financial management.

4:30 - 5:30pm

Internet of Things

Sameer Sharma, Global GM IoT, Intel

Rohit Tripathi, GM & Head of Products, SAP Digital Interconnect

Himagiri Mukkamala, GM & SVP, ARM

Moderator:

Gregory La Blanc, Faculty Director, Haas School of Business

Sameer Sharma : Going for the Edge - AI @ IOT

IoT implementations have moved from “Connect the Unconnected” to smart connected devices and solutions. The next big inflection point will be autonomous systems, and AI plus data will play a pivotal role in enabling this autonomy. This will play out in intelligent factories, cities, and buildings. We will use computer vision as an example to walk through this imminent step-function transition.

Rohit Tripathi : Using intelligent connectivity and AI to transform the world of IoT (Slides)

We are in the midst of significant improvements in sensor and device capabilities, advances in AI, and the promise of low-latency, high-speed connectivity through options such as 5G and NB-IoT. The confluence of these three trends has the potential to transform and simplify the world of IoT. This talk will explore these trends and their impact on the world of IoT.

Himagiri Mukkamala : The fifth wave – AI driven computing for IoT (Slides)

We are entering an era of data-driven computing: IoT will collect the data, 5G will transport it, and ML will process it. The combination of these forces will bring transformation unlike anything seen before. 5G alone is expected to bring economic impact akin to the industrial revolution, and the IoT is predicted to bring trillions in value to many different industries. The fifth wave represents a massive opportunity for the ecosystem to create new businesses and drive economic growth. The trillion IoT devices will not just be connected; they will be intelligent, a paradigm shift enabled by ML in every IoT endpoint. The bigger impact of AI is already happening today, as machine learning becomes part of our daily lives in billions of tiny moments. Machine learning will be needed to handle the massive amount of data that will be part of the fifth wave. Data is not valuable in itself if its volume obscures the important information, so we can use ML at the edge to identify the critical data that should be shared. This is why we as an industry need to focus on the edge for the future of AI. We've worked hard on moving data elsewhere for processing, but for a trillion devices, that approach isn't sustainable. Moving, processing, and storing data all have costs in terms of efficiency and security. We need to shift our focus to processing data where it is collected and used, driving more value from IoT.

5:30 - 6:00pm

Keynote

Kai-Fu Lee, Chairman & CEO, Sinovation Ventures

Kai-Fu Lee : The Era of Artificial Intelligence (Slides)

In this talk, I will discuss the four waves of Artificial Intelligence (AI) and how AI will permeate every part of our lives in the next decade. I will also talk about how this will differ from previous technology revolutions: it will be faster, and it will be driven not by one superpower but by two (the US and China). AI will add $16 trillion to global GDP, but it will also cause many challenges that will be hard to solve. In particular, I will talk about AI replacing routine jobs: the consequences and the proposed solutions that don't work (such as UBI). I will end with a blueprint for the co-existence of humans and AI.

7:00 - 9:00pm

Dinner Banquet

Jay Yagnik, VP, Google AI

Keynote speech

Jay Yagnik : A History Lesson on AI (Slides)

We have reached a remarkable point in history with the evolution of AI, from applying this technology to incredible use cases in healthcare, to addressing the world's biggest humanitarian and environmental issues. Our ability to learn task-specific functions for vision, language, sequence and control tasks is getting better at a rapid pace. This talk will survey some of the current advances in AI, compare AI to other fields that have historically developed over time, and calibrate where we are in the relative advancement timeline. We will also speculate about the next inflection points and capabilities that AI can offer down the road, and look at how those might intersect with other emergent fields, e.g., quantum computing.

Banquet and Networking

The evening consists of sponsor pitches and a presentation from Jay Yagnik, VP of Google AI. The banquet is a full-course, sit-down dinner with wine and a chance to interact with the speakers on a one-to-one basis. You will have the opportunity to meet and mingle with other guests, build connections, raise your company's profile, and form potential business and research partnerships.

Day 2, November 10, 2018

9:00 - 9:05am

Day 2 Opening Remark

by Apoorv Saxena, Conference Co-Chair

Apoorv Saxena, Global Head of AI, JPMorgan Chase

9:05 - 9:40am

Morning Keynote

Melissa Goldman, Chief Information Officer, JP Morgan Chase

Melissa Goldman : AI & Finance (Slides)

AI in finance is having a wide-ranging impact and solving some of the most critical societal problems. The talk gives an overview of the opportunities for applying AI in finance, with specific examples, and highlights some of the unique challenges financial services firms face in deploying AI at scale.

9:40 - 10:40am

Robots

Pieter Abbeel, Professor, UC Berkeley

Mario Munich, SVP Technology, iRobot

Moderator:

Mohan Reddy, CTO, The Hive

Pieter Abbeel : Deep Learning for Robotics

Programming robots remains notoriously difficult. Equipping robots with the ability to learn would bypass the need for what otherwise often ends up being time-consuming, task-specific programming. This talk will describe recent progress in deep reinforcement learning (robots learning through their own trial and error), in apprenticeship learning (robots learning from observing people), and in meta-learning for action (robots learning to learn). This work has led to new robotic capabilities in manipulation, locomotion, and flight.

Mario Munich : Consumer robotics: embedding affordable AI in everyday life (Slides)

The availability of affordable electronic components, powerful embedded microprocessors, and ubiquitous internet access and WiFi in the household has enabled a new generation of connected consumer robots. In 2015, iRobot launched the Roomba 980, introducing intelligent visual navigation to its successful line of vacuum cleaning robots. In 2018, iRobot launched the Roomba i7, equipped with the latest mapping and navigation technology that provides spatial information to the broader ecosystem of connected devices in the home. In this talk, I will describe the challenges and the potential of introducing consumer robots capable of developing spatial context by exploring the physical space of the home, and I will elaborate on the impact of AI in the future of robotics applications. Moreover, I will describe our vision of the Smart Home, an AI-powered home that maintains itself and magically just does the right thing in anticipation of occupant needs. This home will be built on an ecosystem of connected and coordinated robots, sensors, and devices that provides the occupants with a high quality of life by seamlessly responding to the needs of daily living, from comfort to convenience to security to efficiency.

10:40 - 11:20am

Drones

Arnaud Thiercelin, Head of R&D, DJI

Mark Moore, Engineering Director, Uber

Industry experts from companies that are actively developing drones come together to discuss their work. What is the current state of drones? Our speakers are Arnaud Thiercelin, Head of R&D at DJI, and Mark Moore, Engineering Director at Uber.

Arnaud Thiercelin : AI in the Sky (Slides)

Mark Moore : Uber Elevate (Slides)

11:20 - 11:45am

Distributed Deep Learning

Anima Anandkumar, Director of Machine Learning Research, NVIDIA